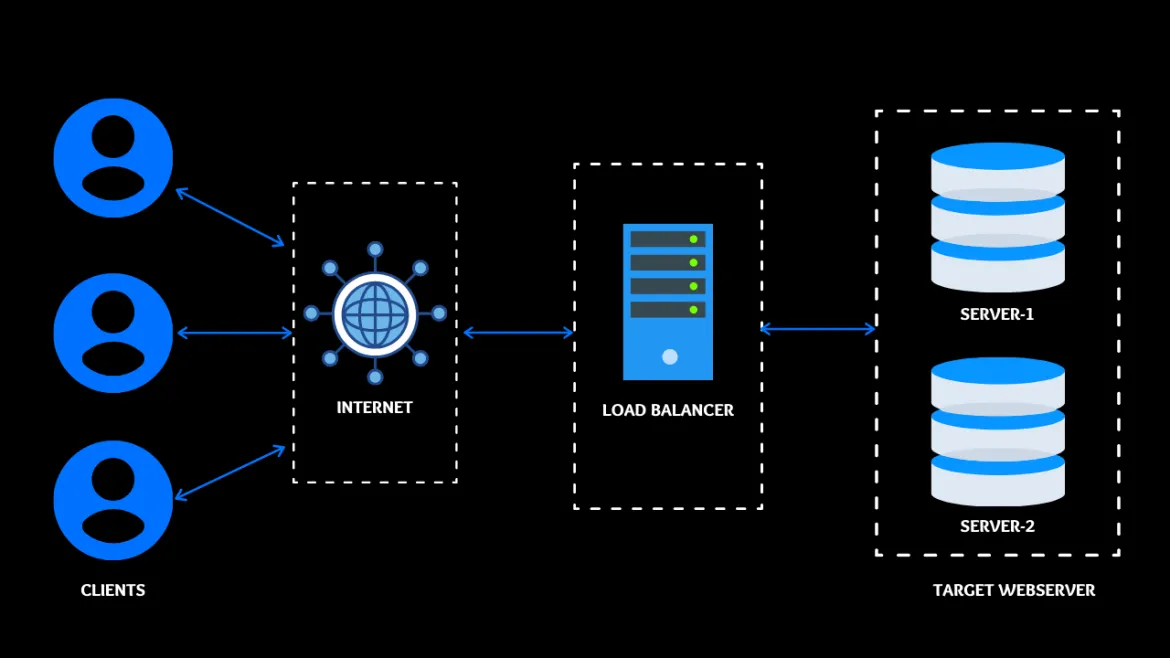

What is a load balancer? A load balancer distributes incoming network traffic across a group of backend servers, which is also known as a server farm or server pool. It forwards client requests to the target web servers so that no single server carries the whole load. Load balancers can use several scheduling algorithms. The configuration in this article uses round-robin, which hands requests to each backend in turn and helps improve reliability and availability.

One scenario

You have a web server that can manage 100 clients at a time. Suddenly the requests to that particular server double. It's likely that the website will crash or become unresponsive. To avoid this situation, set up additional target web servers behind a main server. In this scenario, the client never connects to a target web server directly. Instead, the request goes to the master server, and the master server sends the request to a target web server. The target web server replies to the master server, which passes the response back to the client. A master server that works this way is known as a reverse proxy.


Using HAProxy as a proxy

HAProxy is a TCP and HTTP load balancer that can be configured as a reverse proxy. The port on which the main server accepts client connections is called the frontend port. Here we'll look at how I configured HAProxy by using an Ansible playbook.

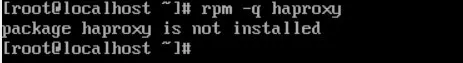

Check the system where you need to configure HAProxy

HAProxy is not installed on this system. You can confirm that with the following command:

rpm -q haproxy
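
If you would rather run the same check from the controller node, a minimal sketch with the package_facts module could look like the following. The play layout and the lb group name are assumptions borrowed from the playbook later in this article, and the debug message is only illustrative.

```yaml
- hosts: lb
  tasks:
    # Collect the list of installed packages into ansible_facts.packages
    - name: "Gather package facts"
      ansible.builtin.package_facts:
        manager: auto

    # Report whether haproxy appears in the gathered package list
    - name: "Report whether haproxy is installed"
      ansible.builtin.debug:
        msg: "haproxy installed: {{ 'haproxy' in ansible_facts.packages }}"
```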

Steps to configure HAProxy

Step 1 - Install HAProxy

To install HAProxy, use the package module and give it the name of the package you want to install:

    - name: "Configure Load balancer"
      package:
        name: haproxy

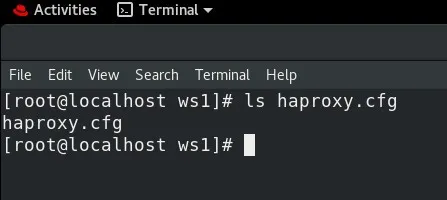

Step 2 - Copy the configuration file for the reverse proxy

Copy the configuration file so that you can modify it:

cp /etc/haproxy/haproxy.cfg /root/ws1/haproxy.cfg

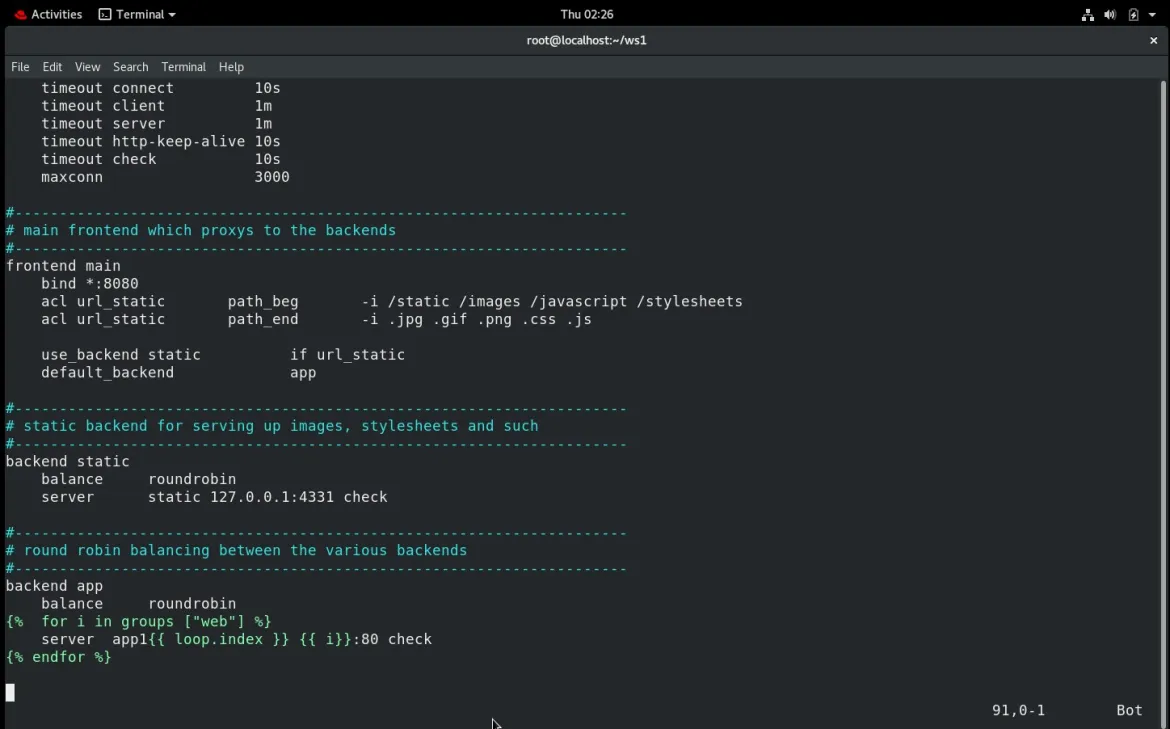

Step 3 - Change frontend port and assign backend IPs

By default, the frontend is bound to port 5000. I changed the port number to 8080. I also used a Jinja2 for loop to generate the backend server entries. Now you can launch as many web servers as you need, and there is no need to manually add their IP addresses to /etc/haproxy/haproxy.cfg. The template fetches the addresses automatically from the web group in the inventory.

    backend app
        balance roundrobin
    {% for i in groups["web"] %}
        server app1{{ loop.index }} {{ i }}:80 check
    {% endfor %}
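
The loop reads its hosts from the web group of the inventory. The article does not show the inventory itself, so the following YAML inventory is only a sketch. The file name is hypothetical, and the IP addresses are placeholders from the documentation range, not the author's real hosts.

```yaml
# inventory.yml (hypothetical file name and addresses)
all:
  children:
    # Backend web servers; the template loops over this group
    web:
      hosts:
        192.0.2.11:
        192.0.2.12:
    # The host that runs HAProxy
    lb:
      hosts:
        192.0.2.10:
```

With an inventory like this, the loop would produce one server line per host in the web group, so adding a third web server only requires a new entry under web.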

Step 4 - Copy haproxy.cfg to the managed node

Use the template module to copy the HAProxy config file from the controller node to the managed node:

    - template:
        dest: "/etc/haproxy/haproxy.cfg"
        src: "/root/ws1/haproxy.cfg"
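
The template module also accepts a validate parameter that runs a command against the rendered file before it is moved into place. As a sketch, assuming the haproxy binary is on the managed node's PATH, the task could ask HAProxy to check the new configuration first:

```yaml
- name: "Deploy haproxy.cfg only if HAProxy accepts it"
  ansible.builtin.template:
    src: "/root/ws1/haproxy.cfg"
    dest: "/etc/haproxy/haproxy.cfg"
    # %s is replaced with the path of the temporary rendered file;
    # haproxy -c only checks the configuration and then exits
    validate: "haproxy -c -f %s"
```

If the check fails, the task fails and the existing configuration file is left in place.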

Step 5 - Start the service

Use the service module to start the HAProxy service:

    - service:
        name: "haproxy"
        state: restarted
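
Restarting on every run works, but the service module can also make sure HAProxy starts at boot. The following variant is a sketch rather than the author's task. It swaps restarted for started and adds enabled:

```yaml
- name: "Start HAProxy now and enable it at boot"
  ansible.builtin.service:
    name: "haproxy"
    state: started   # starts the service only if it is not already running
    enabled: true    # start the service automatically at boot
```

Note that with started, a changed configuration file is not picked up by an already running service, so restarted remains the simpler choice whenever the config has just been replaced.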

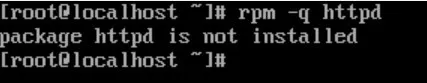

Check the system where you need to install the httpd web server

For testing the HAProxy configuration, you will also configure httpd on your target node with the help of Ansible. To check that you don't already have httpd on your system, use the following command:

rpm -q httpd

Step 1 - Install httpd

The package module is used to install httpd on the managed node:

    - name: "HTTPD CONFIGURE"
      package:
        name: httpd

Step 2 - Copy the webpage

The template module is used to copy your webpage from the source to the destination:

    - template:
        dest: "/var/www/html/index.html"
        src: "/root/ws1/haproxy.html"
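
To see the load balancer alternate between backends during testing, it helps if each web server serves a page that identifies itself. The article does not show the contents of haproxy.html, so this is a hypothetical alternative that uses the copy module with inline content instead of the template file:

```yaml
- name: "Write a test page that names the serving host"
  ansible.builtin.copy:
    dest: "/var/www/html/index.html"
    # inventory_hostname is the name of the host as listed in the inventory
    content: "Served by {{ inventory_hostname }}\n"
```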

Step 3 - Start the service

The service module is used to start the httpd service:

    - service:
        name: "httpd"
        state: restarted

Complete the playbook to configure the reverse proxy

This playbook has two plays that target two different host groups. One group is for the web servers, and the other is for the load balancer:

    ---
    - hosts: web
      tasks:
        - name: "HTTPD CONFIGURE"
          package:
            name: httpd

        - template:
            dest: "/var/www/html/index.html"
            src: "/root/ws1/haproxy.html"

        - service:
            name: "httpd"
            state: restarted

    - hosts: lb
      tasks:
        - name: "Configure Load balancer"
          package:
            name: haproxy

        - template:
            dest: "/etc/haproxy/haproxy.cfg"
            src: "/root/ws1/haproxy.cfg"

        - service:
            name: "haproxy"
            state: restarted

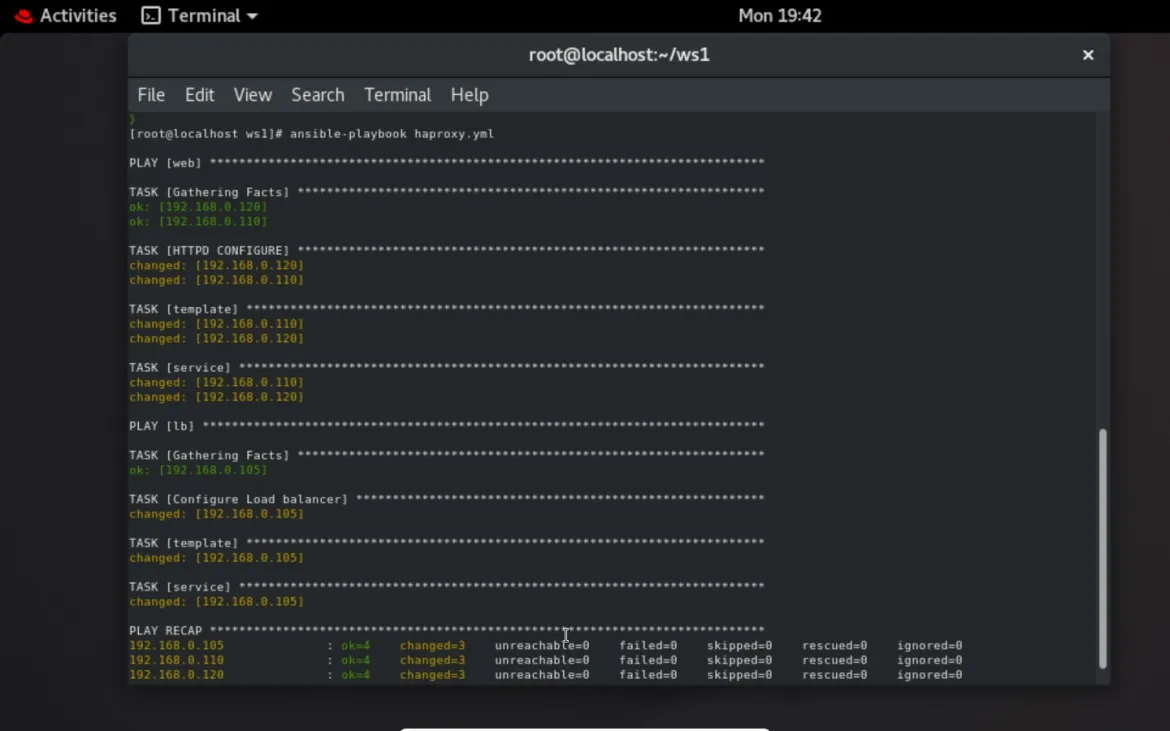

Run the playbook

ansible-playbook haproxy.yml

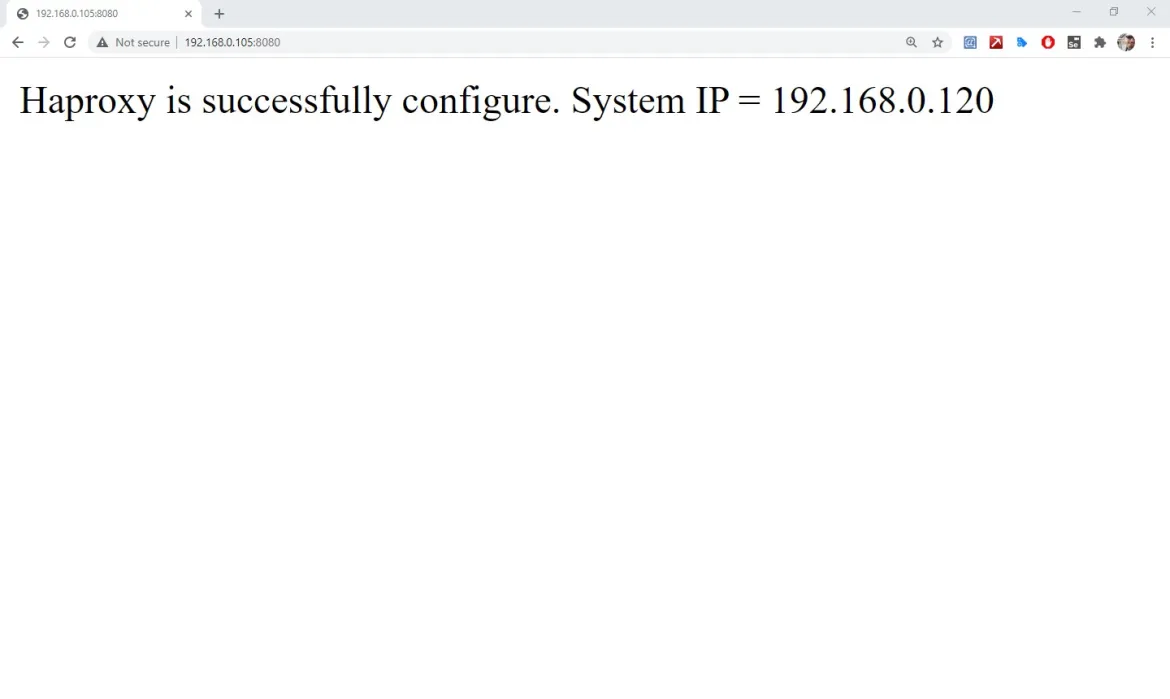

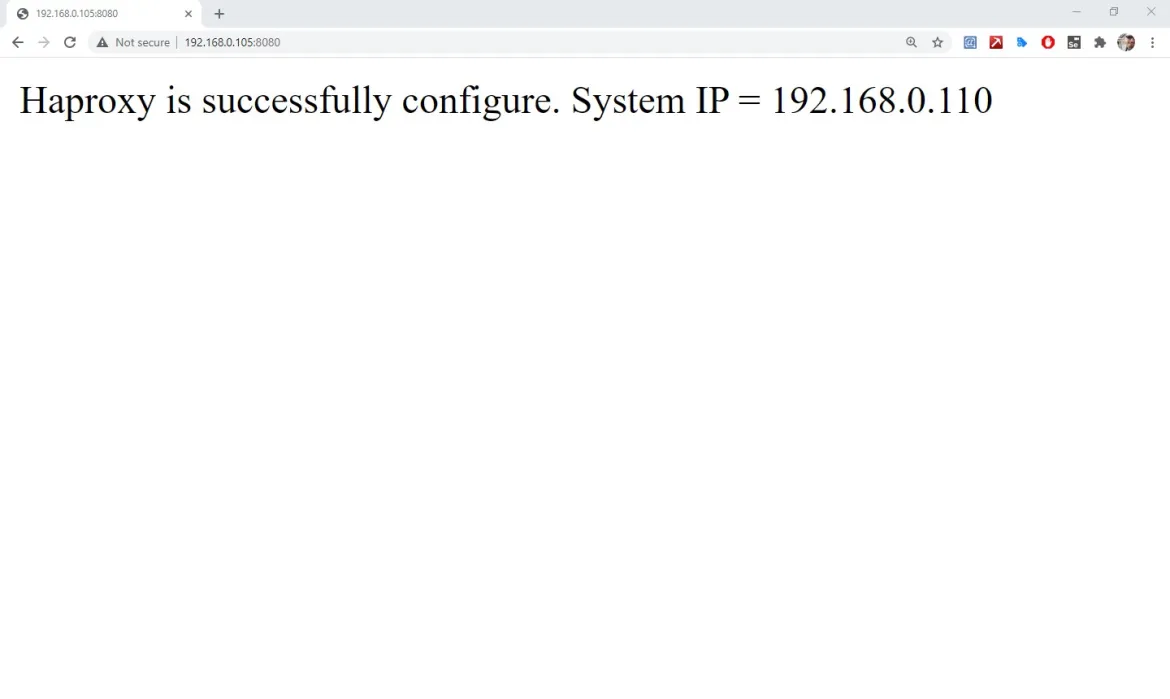

Output

The playbook runs successfully, and clients can now reach the two target web servers through the main server, which acts as the load balancer.
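
One way to check the result from the controller node is to request the frontend a few times and look at the responses. The play below is a sketch under assumptions: it takes the first host in the lb group, uses the 8080 frontend port chosen in Step 3, and expects the controller to be able to reach that address.

```yaml
- hosts: localhost
  gather_facts: false
  tasks:
    # Send four requests to the HAProxy frontend and keep each response body
    - name: "Request the load-balanced site several times"
      ansible.builtin.uri:
        url: "http://{{ groups['lb'][0] }}:8080/"
        return_content: true
      loop: "{{ range(4) | list }}"
      register: responses

    # Print the response bodies in the order they were received
    - name: "Show the responses"
      ansible.builtin.debug:
        msg: "{{ responses.results | map(attribute='content') | list }}"
```

With round-robin balancing, consecutive responses should come from different backends, provided each backend serves a page you can tell apart.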


Conclusion

The load balancer and reverse proxy have now been configured by Ansible. You can add a layer of protection and availability to your web services by adding HAProxy to your infrastructure. Be sure to check out the documentation for your specific target to learn more.

About the author

Sarthak Jain is a pre-final-year computer science undergraduate at the University of Petroleum and Energy Studies (UPES). He is a cloud and DevOps enthusiast who knows various DevOps tools and methodologies. Sarthak has also mentored more than 2,000 students on the latest tech trends through the community Dot Questionmark.
