Red Hat Device Edge is a platform that meets the needs of workloads running on field-deployed devices with constrained resources. Designed for the smallest, most remote locations, Red Hat Device Edge combines Red Hat Enterprise Linux, Ansible Automation Platform, and Red Hat’s build of MicroShift.

MicroShift is a Kubernetes distribution optimized for small, resource-constrained edge devices, and it brings orchestration from inside the data center to the furthest reaches of the edge. MicroShift 4.16 adds several quality-of-life features that make operating a fleet of edge devices at scale easier. They range from simplified, direct updates between extended update support (EUS) versions and the ability to attach pods to multiple networks, to new application lifecycle management through GitOps, and much more. MicroShift 4.16 continues to help teams scale with consistent tooling and processes across disparate edge locations.

Let's take a look at a few of the most exciting features.

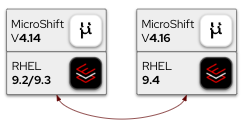

RHEL 9.4 and direct EUS to EUS upgrades

Let's start with the underlying operating system and potential upgrade paths. MicroShift 4.16 runs on Red Hat Enterprise Linux (RHEL) 9.4, and both are extended update support (EUS) releases. That's a switch from RHEL 9.2 and 9.3, which were supported by previous MicroShift releases. Read the RHEL 9.4 release notes to see what changed in the operating system.

A direct EUS-to-EUS upgrade is supported, so you can upgrade directly from 4.14 to 4.16 with a single reboot. Skipping 4.15 minimizes downtime for edge deployments because only one restart is required instead of two. On OSTree-based systems, rollback is also fully supported: if the Greenboot health check detects an unhealthy system, the device automatically rolls back to the previous image (still at 4.14).
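To make the rollback behavior concrete, here is a toy Python model of the upgrade flow described above. It's a simplified sketch, not how OSTree or Greenboot are implemented: the `OSTreeHost` class, the image labels, and the `health_check` callback are hypothetical stand-ins, and the real mechanism works through the bootloader and may retry the new image before falling back.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class OSTreeHost:
    """Toy model of an OSTree host: one booted image plus one rollback image."""

    booted: str
    rollback: str = ""
    log: List[str] = field(default_factory=list)

    def upgrade(self, new_image: str, health_check: Callable[[str], bool]) -> str:
        """Stage new_image, reboot once, and roll back if the health check fails."""
        previous = self.booted
        # The new image becomes the booted one; the old image is kept for rollback.
        self.booted, self.rollback = new_image, previous
        self.log.append(f"rebooted into {new_image}")
        if health_check(new_image):
            self.log.append(f"{new_image} healthy")
            return self.booted
        # Unhealthy: boot the previous image again (for example, still MicroShift 4.14).
        self.booted, self.rollback = previous, new_image
        self.log.append(f"{new_image} unhealthy, rolled back to {previous}")
        return self.booted


host = OSTreeHost(booted="microshift-4.14")
print(host.upgrade("microshift-4.16", health_check=lambda image: False))  # microshift-4.14
```

The point of the model is that the previous image is never discarded during the upgrade, which is what makes an automatic fallback possible after a failed health check.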

Even-numbered versions of MicroShift are EUS releases. For EUS releases, Red Hat provides backports of Critical and Important impact security updates and urgent-priority bug fixes. See the product life cycle page for exact dates and details.
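The upgrade arithmetic in this section can be written down as a small helper. This is an illustrative sketch under the assumptions stated above (even-numbered minor versions are EUS, and each upgrade hop costs one reboot). It is not an official support matrix, and `reboots_needed` is a hypothetical name; always check the product documentation for supported upgrade paths.

```python
def minor(version: str) -> int:
    """Return y from a MicroShift 4.y or 4.y.z version string."""
    major, minor_part = version.split(".")[:2]
    if major != "4":
        raise ValueError(f"unexpected major version in {version!r}")
    return int(minor_part)


def is_eus(version: str) -> bool:
    # Even-numbered minor versions are EUS releases.
    return minor(version) % 2 == 0


def reboots_needed(current: str, target: str) -> int:
    """Reboots for an upgrade, assuming one reboot per upgrade hop.

    An EUS-to-EUS upgrade (e.g. 4.14 -> 4.16) is a single hop; any other path is
    assumed to step through each minor version in turn.
    """
    cur, tgt = minor(current), minor(target)
    if tgt <= cur:
        raise ValueError("target must be a newer minor version")
    if is_eus(current) and is_eus(target) and tgt - cur == 2:
        return 1
    return tgt - cur


print(reboots_needed("4.14.8", "4.16"))  # 1, instead of 2 when going through 4.15
```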

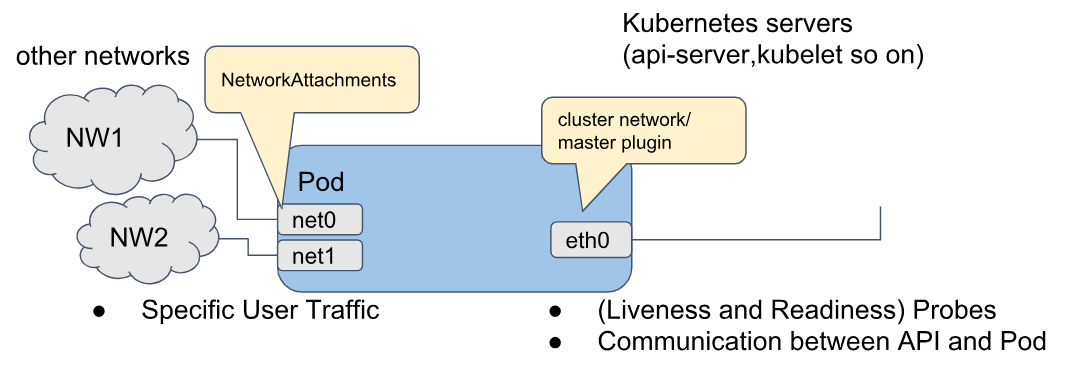

Attach a pod to multiple networks using Multus

Using multiple networks is now supported with the MicroShift Multus plugin. If you have advanced networking requirements, you can attach additional networks to pods. A common use case is a pod that needs to connect to an operational network, such as an industrial control system or sensor network.

You can install the optional Multus plugin on day 1 for a new installation, or later as a day 2 operation. Just add the RPM package microshift-multus to your deployment or image build.

After installing the MicroShift Multus RPM package, you can use the Bridge, MACVLAN, or IPVLAN plugins to create additional networks using the NetworkAttachmentDefinition API.
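As an illustration, the following Python sketch builds a NetworkAttachmentDefinition for one of these plugins and prints it as JSON, which `oc apply -f -` accepts. The apiVersion, the kind, and the convention that `spec.config` holds the CNI configuration as a JSON string come from the Multus NetworkAttachmentDefinition API. The network name, interface, subnet, `cniVersion`, and the choice of host-local IPAM are assumptions to adapt to your environment.

```python
import json


def network_attachment_definition(name: str, plugin: str, device: str, subnet: str,
                                  namespace: str = "default") -> dict:
    """Build a NetworkAttachmentDefinition for the bridge, macvlan, or ipvlan CNI plugin.

    device is the Linux bridge name for "bridge", or the parent NIC for macvlan/ipvlan.
    """
    if plugin not in ("bridge", "macvlan", "ipvlan"):
        raise ValueError(f"unsupported plugin: {plugin}")
    cni = {
        "cniVersion": "0.4.0",
        "type": plugin,
        # host-local hands out addresses from a fixed range on this node.
        "ipam": {"type": "host-local", "subnet": subnet},
    }
    cni["bridge" if plugin == "bridge" else "master"] = device
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {"name": name, "namespace": namespace},
        # The CNI configuration is embedded as a JSON string, not a nested object.
        "spec": {"config": json.dumps(cni)},
    }


# Hypothetical shop floor network on a second NIC named eth1.
nad = network_attachment_definition("shopfloor", "macvlan", "eth1", "192.168.10.0/24")
print(json.dumps(nad, indent=2))
```

Generating the manifest from one function keeps the three plugin variants consistent; only the key that names the host device (`bridge` or `master`) differs between them.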

Multus also offers an interim path to IPv6. MicroShift itself does not currently support IPv6, but full IPv6 support is on the roadmap for the near future. Until then, you can use the bridge network plugin to connect a pod to a NIC with an IPv6 address.
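A sketch of that interim IPv6 setup might look like the following: a bridge NetworkAttachmentDefinition that assigns a static IPv6 address through the static IPAM plugin, plus a pod that requests the extra interface through the `k8s.v1.cni.cncf.io/networks` annotation that Multus reads. The bridge name, the address from the `fd00::/8` unique local range, the pod name, and the image are hypothetical, and you should confirm the supported IPAM types in the MicroShift Multus documentation.

```python
import ipaddress
import json
from typing import List


def ipv6_bridge_nad(name: str, bridge: str, address: str) -> dict:
    """Bridge NAD giving the pod a static IPv6 address on a secondary interface."""
    iface = ipaddress.ip_interface(address)  # raises ValueError on malformed input
    if iface.version != 6:
        raise ValueError(f"expected an IPv6 address with prefix length, got {address}")
    cni = {
        "cniVersion": "0.4.0",
        "type": "bridge",
        "bridge": bridge,  # host bridge; the IPv6 NIC is assumed to be attached to it
        "ipam": {"type": "static", "addresses": [{"address": str(iface)}]},
    }
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {"name": name},
        "spec": {"config": json.dumps(cni)},
    }


def pod_on_networks(pod_name: str, image: str, networks: List[str]) -> dict:
    """Pod that asks Multus to attach the listed NADs as additional interfaces."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {
            "name": pod_name,
            "annotations": {"k8s.v1.cni.cncf.io/networks": ",".join(networks)},
        },
        "spec": {"containers": [{"name": pod_name, "image": image}]},
    }


nad = ipv6_bridge_nad("ipv6-net", "br-ipv6", "fd00:10::20/64")
pod = pod_on_networks("sensor-reader", "registry.example.com/sensor:1.0", ["ipv6-net"])
print(json.dumps(nad, indent=2))
print(json.dumps(pod, indent=2))
```

Because the address is static, each NAD built this way suits a single pod; the pod's default cluster network stays IPv4 and only the secondary interface carries IPv6.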

Multiple Networks with MicroShift

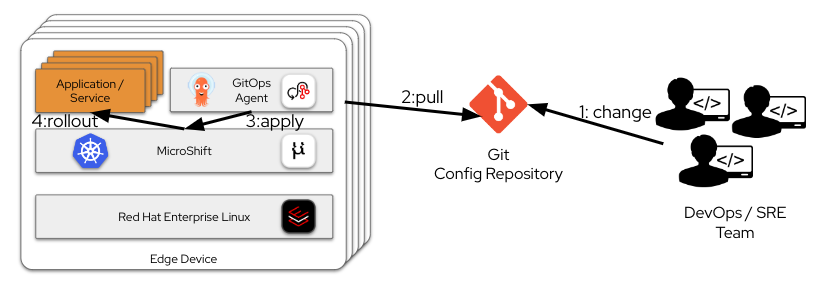

GitOps at scale with MicroShift (tech preview)

Thanks to our close collaboration with the OpenShift GitOps team, MicroShift now supports a lightweight GitOps agent, available as an optional component. This enables consistent application lifecycle management at scale for edge deployments.

Managing a large fleet of edge devices can be challenging compared to traditional, centralized computing. You probably have to contend with varying locations, connectivity issues, staff availability, and differences in architecture. Microservices-based applications with complex deployments make this even more complicated. Despite these challenges, it's crucial that every edge device runs the same, consistent version of each deployment.

In recent years, a GitOps-based approach has become the gold standard for solving this challenge. A Git repository hosts the target configuration, and the repository is changed through established Git workflows (for instance, pull requests are reviewed and then approved). In data center deployments, there's usually one central controller that reconciles the desired configuration in the Git repository with the actual configuration in the clusters. Any difference is either reported or fixed by syncing to the cluster. This is called a push-based approach, because the central GitOps controller pushes configuration changes out to the managed clusters through each cluster's API endpoint. Argo CD is a prominent open source project that provides such a GitOps controller.
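The push-based loop can be sketched in a few lines of Python. This is a toy model, not Argo CD's implementation: cluster state is a plain dictionary keyed by a hypothetical `kind/namespace/name` string, and a `reachable` flag stands in for network access to each cluster's API endpoint. Note how an unreachable cluster simply gets no reconciliation at all.

```python
import copy
from typing import Dict, List, Tuple

State = Dict[str, dict]  # "kind/namespace/name" -> object spec


def diff(desired: State, actual: State) -> List[Tuple[str, str]]:
    """List the changes needed to make actual match desired."""
    changes = []
    for key in sorted(desired.keys() | actual.keys()):
        if key not in actual:
            changes.append(("create", key))
        elif key not in desired:
            changes.append(("delete", key))
        elif desired[key] != actual[key]:
            changes.append(("update", key))
    return changes


def push_reconcile(desired: State, clusters: Dict[str, State],
                   reachable: Dict[str, bool], auto_sync: bool) -> Dict[str, list]:
    """One pass of a central controller pushing the Git state to every cluster."""
    report: Dict[str, list] = {}
    for name, actual in clusters.items():
        if not reachable.get(name, False):
            report[name] = ["unreachable"]  # e.g. behind a firewall: drift goes unnoticed
            continue
        report[name] = diff(desired, actual)  # "report" mode
        if auto_sync:
            # "Sync" mode: overwrite the cluster state with a copy of the desired state.
            actual.clear()
            actual.update(copy.deepcopy(desired))
    return report
```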

This push-based approach has drawbacks for edge deployments. An edge device is frequently behind a firewall, so its API endpoint isn't reachable from the core system. Reconciliation also works only while connectivity is available, so local changes made by a human aren't detected or corrected during offline periods.

These challenges can be solved by using a pull-based approach. In this model, endpoints reach out for pending updates. Each edge device gets its own local GitOps controller, which reconciles with the local cluster API and a remote Git repository. The pending configuration is pulled from Git when connectivity is available and cached locally. Then the reconciliation with the API server can still happen even when connectivity isn't available. Also, because a connection is initiated from the edge device to the central Git repository, this method is firewall friendly.
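Here is the same idea turned around, as a minimal pull-based sketch. The hypothetical `PullAgent` fetches the desired state over an outbound connection when it can, caches it, and reconciles against the local cluster on every pass, so a manual change made while offline is still reverted. The `fetch` callback stands in for a Git pull and is an assumption of the sketch, not a real Argo CD interface.

```python
import copy
from typing import Callable, Dict, Optional

State = Dict[str, dict]


class PullAgent:
    """Toy local GitOps agent: pull desired state when online, reconcile always."""

    def __init__(self, fetch: Callable[[], Optional[State]]):
        self.fetch = fetch  # returns None when the Git server is unreachable
        self.cache: Optional[State] = None

    def reconcile(self, cluster: State) -> bool:
        """Return True if the local cluster had drifted and was corrected."""
        fresh = self.fetch()  # outbound connection from the device: firewall friendly
        if fresh is not None:
            self.cache = copy.deepcopy(fresh)
        if self.cache is None:
            return False  # never synced yet, so there is nothing to enforce
        if cluster != self.cache:  # drift, e.g. a manual change on the device
            cluster.clear()
            cluster.update(copy.deepcopy(self.cache))
            return True
        return False


online = {"up": True}
git = {"ConfigMap/app/settings": {"mode": "auto"}}
agent = PullAgent(lambda: git if online["up"] else None)
cluster: State = {}
agent.reconcile(cluster)  # online: pulls and applies the Git state
online["up"] = False
cluster["ConfigMap/app/settings"] = {"mode": "manual"}  # local edit while offline
print(agent.reconcile(cluster), cluster)  # drift is reverted from the cache
```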

An important consideration for this approach is that the GitOps controller runs on the edge device itself and consumes additional resources there, so the controller must be small and lightweight. That's exactly what's now available for MicroShift: add the microshift-gitops RPM package for a small, lightweight Argo CD deployment on MicroShift.
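Once the agent is installed, applications are described as Argo CD `Application` resources. The sketch below builds one as a Python dictionary using standard Argo CD fields (`source.repoURL`, `targetRevision`, `path`, `destination`, and an automated `syncPolicy` whose `selfHeal` option reverts local drift). The repository URL, paths, and names are placeholders, and the `openshift-gitops` namespace is an assumption about where microshift-gitops runs, so check it on your system.

```python
import json


def argocd_application(name: str, repo_url: str, path: str, dest_namespace: str,
                       revision: str = "main",
                       argocd_namespace: str = "openshift-gitops") -> dict:
    """Argo CD Application that keeps a Git path synced into the local cluster."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Application",
        "metadata": {"name": name, "namespace": argocd_namespace},
        "spec": {
            "project": "default",
            "source": {"repoURL": repo_url, "targetRevision": revision, "path": path},
            # The controller runs on the device, so the target is the local API server.
            "destination": {"server": "https://kubernetes.default.svc",
                            "namespace": dest_namespace},
            "syncPolicy": {
                # prune removes resources deleted from Git; selfHeal reverts local drift.
                "automated": {"prune": True, "selfHeal": True},
                "syncOptions": ["CreateNamespace=true"],
            },
        },
    }


app = argocd_application("line-monitor", "https://git.example.com/edge/apps.git",
                         "line-monitor", "line-monitor")
print(json.dumps(app, indent=2))
```

Pointing every device at the same repository path and revision is what keeps the fleet on one consistent version; each device's local controller does the reconciling.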

The GitOps process

Custom API server certificates

The default API server certificate is issued by an internal MicroShift cluster certificate authority (CA), so clients outside the cluster can't easily verify it. This is a problem when the API must be exposed to external clients but security requirements don't allow self-signed certificates.

You can replace the API server certificate with custom server certificates issued by an external CA that your clients trust. You can even configure multiple certificates for alternative names. See the MicroShift documentation for details on how to configure this.

Ingress router controls

In previous versions, the ingress router was always on, listening on ports 80 and 443 on all available IP addresses. This can be a problem on multi-homed edge devices, where ingress traffic may be expected only on a certain network. It also conflicts with the security best practice of minimizing the attack surface.

Starting with MicroShift 4.16, admins can:

- Disable the router. In some use cases, MicroShift is egress only. For instance, in industrial IoT solutions where pods connect only to southbound shop floor systems and northbound cloud systems, there might be no inbound services at all. Disabling the router minimizes the attack surface and reduces resource consumption, because the router pod is not started

- Configure which ports the router is listening on

- Configure which IP addresses and network interfaces the router is listening on. There are use cases in the industrial space where the router should be reachable only on internal shop floor networks, but not on northbound public networks (or the other way round, or both)
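The three controls map to a small section of the MicroShift configuration file. The following Python sketch builds and validates that section and prints it as JSON for inspection. The field names (`ingress.status` with `Managed` or `Removed`, `ingress.ports.http` and `ingress.ports.https`, and `ingress.listenAddress` accepting IP addresses or interface names) are assumptions based on the 4.16 configuration schema; check them against the default configuration file shipped with MicroShift before copying anything into /etc/microshift/config.yaml.

```python
import ipaddress
import json
from typing import List, Optional


def ingress_config(enabled: bool = True, http: int = 80, https: int = 443,
                   listen: Optional[List[str]] = None) -> dict:
    """Build the ingress section of a MicroShift config (field names assumed)."""
    if not enabled:
        # Egress-only device: the router pod is not started at all.
        return {"ingress": {"status": "Removed"}}
    for port in (http, https):
        if not 1 <= port <= 65535:
            raise ValueError(f"invalid port: {port}")
    if http == https:
        raise ValueError("http and https must use different ports")
    addresses = []
    for item in listen or []:
        try:
            addresses.append(str(ipaddress.ip_address(item)))  # an IP address
        except ValueError:
            addresses.append(item)  # otherwise assume a NIC name such as "eth1"
    section = {"status": "Managed", "ports": {"http": http, "https": https}}
    if addresses:
        # Only set when restricted; omitting it is assumed to keep "all addresses".
        section["listenAddress"] = addresses
    return {"ingress": section}


# Router reachable only via the shop floor NIC and one internal IP, on non-default ports.
print(json.dumps(ingress_config(http=8080, https=8443, listen=["eth1", "10.0.0.5"]), indent=2))
```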

Configurable audit logging

Previously, the MicroShift audit logging facility (which logs all API calls) used a hard-coded audit log policy and configuration. Starting with 4.16, audit logging can be configured in more detail, which can help you comply with your organization's audit logging rules.

Controlling the rotation and retention of the audit log file through configuration values helps keep far-edge devices from exceeding their limited storage capacity. On such devices, accumulated logging data can starve host system or cluster workloads of storage, potentially causing the device to stop working. Setting audit log policies helps ensure that critical storage space remains available.

The values you set to limit audit logs enable you to enforce size, number, and age limits of audit log backups. Field values are processed independently of one another, without prioritization.

You can set fields in combination to define a maximum storage limit for retained logs. For example:

- Set both maxFileSize and maxFiles to create a log storage upper limit

- Set a maxFileAge value to automatically delete files whose age, based on the timestamp in the file name, exceeds that value, regardless of the maxFiles value
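To see how these fields interact, here is a small Python sketch of the retention rules. It is a model, not MicroShift's implementation, and it rests on assumptions worth checking in the documentation: maxFileSize is in megabytes, maxFiles counts rotated backups kept next to the active file, maxFileAge is in days, and a value of 0 disables the corresponding rule (as with the kube-apiserver audit log flags). The backup timestamps are passed in already parsed, so the file-name format in the example is hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def storage_upper_limit_mb(max_file_size_mb: int, max_files: int) -> int:
    """Worst-case disk use, assuming maxFiles rotated backups plus one active file."""
    return max_file_size_mb * (max_files + 1)


def files_to_delete(backups: List[Tuple[str, datetime]], now: datetime,
                    max_files: int, max_file_age_days: int) -> List[str]:
    """Apply maxFiles and maxFileAge independently; 0 disables a rule (assumed)."""
    newest_first = sorted(backups, key=lambda b: b[1], reverse=True)
    doomed = set()
    if max_files > 0:
        # Count limit: keep only the newest max_files backups.
        doomed.update(name for name, _ in newest_first[max_files:])
    if max_file_age_days > 0:
        # Age limit: drop anything older than the cutoff, however few files remain.
        cutoff = now - timedelta(days=max_file_age_days)
        doomed.update(name for name, ts in newest_first if ts < cutoff)
    return sorted(doomed)


print(storage_upper_limit_mb(max_file_size_mb=200, max_files=10))  # 2200
```

Because each rule marks files for deletion on its own, a file is removed as soon as either limit applies, which matches the statement that the fields are processed independently without prioritization.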

Additionally, audit profiles can be configured to control the level of detail that gets written to the audit log.
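As a rough guide to those levels of detail, the following Python sketch maps an audit profile and request verb to the Kubernetes audit level at which the request would be logged. The profile names (`Default`, `WriteRequestBodies`, `AllRequestBodies`, `None`) are the OpenShift audit profiles, which we assume the MicroShift `apiServer.auditLog.profile` setting accepts. The mapping is simplified and ignores special cases such as sensitive resources that are only ever logged at the metadata level.

```python
WRITE_VERBS = {"create", "update", "patch", "delete", "deletecollection"}


def audit_level(profile: str, verb: str) -> str:
    """Simplified audit level for one request under a given profile."""
    if profile == "None":
        return "None"  # nothing is logged
    if profile == "Default":
        return "Metadata"  # who did what, when; no request bodies
    if profile == "WriteRequestBodies":
        # Bodies only for requests that change state.
        return "RequestBody" if verb in WRITE_VERBS else "Metadata"
    if profile == "AllRequestBodies":
        return "RequestBody"  # bodies for reads and writes alike
    raise ValueError(f"unknown audit profile: {profile}")


for p in ("Default", "WriteRequestBodies", "AllRequestBodies", "None"):
    print(p, audit_level(p, "get"), audit_level(p, "patch"))
```

More detailed profiles produce larger log entries, so they interact directly with the size and retention limits described above.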

MicroShift evolves

This article has covered only a few of the many new features in MicroShift. For a full list of new features in MicroShift 4.16, see the release notes, and read the documentation for even more detail.

About the author

Daniel Fröhlich works as a Global Principal Solution Architect Industry 4.0 at Red Hat. He considers himself a catalyst who brings together the necessary resources (people, technology, methods) to make mission-critical projects a success. Fröhlich has more than 25 years of experience in IT. In recent years, he has focused on hybrid cloud and container technologies in the industrial space.
