Everyone declared 2024 the year of generative AI (gen AI). But behind the scenes, another shift was unfolding, one that could reshape IT infrastructure for years to come. Sparked by escalating costs and risks, virtualization became a topic of both interest and necessity for leadership and infrastructure teams alike.

Organizations began looking to migrate their virtual machines (VMs) to a more modern virtualization platform. By migrating to a new platform, organizations can accelerate application delivery, reduce operational costs and minimize risks like vendor lock-in. Yet, while much of the virtualization discussion has revolved around compute, storage is often the linchpin of a successful migration, directly impacting performance, scalability and reliability.

Red Hat OpenShift Virtualization extends the capabilities of Red Hat OpenShift, providing an open source approach to managing both virtualized and containerized workloads. In this blog post, you’ll learn how OpenShift Virtualization handles storage and which considerations matter when choosing it. Then, I’ll demo how Red Hat and its partner Lightbits Labs help organizations define the right strategy for their workloads.

While every customer's journey is unique, there are fundamental considerations when planning and architecting the next generation of an organization’s virtualization infrastructure. These generally revolve around the capabilities of the virtualization technology itself, capacity and scale, the independent software vendor (ISV) ecosystem, networking capabilities and storage integration.

Storage types for VMs

In traditional virtualization, storage is typically attached via storage area network (SAN) over Fibre Channel (FC), iSCSI (Internet Small Computer Systems Interface) or a Network File System (NFS). These storage technologies have been the backbone of many virtualization deployments due to their reliability and performance. However, as organizations move toward modernization, storage considerations must evolve to meet new demands for increased flexibility, scale and cost efficiency.

When transitioning to OpenShift Virtualization, organizations must evaluate how different storage types—file system storage and block storage—align with their workload needs. Both storage types serve as back-end storage for VMs, but they differ in performance, access patterns and use cases.

OpenShift Virtualization supports both as primary storage types for VMs:

- File system storage (e.g., NFS) is preformatted and shared across multiple nodes. It allows concurrent write access and is ideal for shared data workloads.

- Block storage provides raw storage volumes that require a file system. Unlike file storage, block storage is typically dedicated to a single workload and is well-suited for databases, low-latency applications and high-IOPS scenarios.

Natively, a block device doesn’t support concurrent writes from multiple nodes; this capability has to be provided by a clustered file system on top or, in the case of OpenShift Virtualization, through the CSI (Container Storage Interface) driver. Each CSI driver is designed to interface with a specific storage protocol or vendor solution, enabling OpenShift Virtualization to integrate efficiently with external storage platforms.

While NFS, iSCSI and Fibre Channel have been dominant in traditional environments, modern solutions such as NVMe over TCP are gaining traction for their cost efficiency and high performance. This shift is driven by advancements in software-defined storage and the need for flexible, scalable storage architectures. Choosing between file system and block storage still depends on workload requirements: block storage is the better fit for databases and high-performance applications, and file system storage for shared, lower-cost storage needs.
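In Kubernetes terms, the choice between the two storage types shows up as the `volumeMode` of a persistent volume claim (PVC), and shared access as its `accessModes`. The sketch below prints a PVC manifest for a raw block volume with `ReadWriteMany` access. The PVC name, storage class name and size are placeholders, and whether `ReadWriteMany` is available on block volumes depends on the CSI driver in use.

```shell
# Print a PVC manifest that requests a raw block volume with shared access.
# All three arguments are placeholders chosen for illustration.
render_block_pvc() {
  local pvc_name="$1"
  local storage_class="$2"
  local size="$3"
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ${pvc_name}
spec:
  volumeMode: Block        # raw device; use "Filesystem" for a formatted volume
  accessModes:
    - ReadWriteMany        # shared access across nodes, if the CSI driver supports it
  storageClassName: ${storage_class}
  resources:
    requests:
      storage: ${size}
EOF
}

# Print the manifest to stdout (hypothetical names).
render_block_pvc demo-data-disk example-nvme-tcp 100Gi
```

On a cluster, the output could be piped to `oc apply -f -` to create the claim.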

As mentioned earlier, the most common block storage we encounter with customers is SAN storage attached via Fibre Channel. This is primarily due to the bandwidth limitations of traditional networking equipment and the capabilities and robustness of storage arrays on a Fibre Channel SAN. While Fibre Channel arrays have traditionally provided much better reliability, robustness, IOPS, latency and advanced features, advancements in networking and software-defined storage are changing how we think about designing storage networks for the next generation of virtualization.

The other benefit of designing software-defined storage networks is cost: Ethernet-based storage technologies are significantly more cost-effective than the prior generation of SAN-based storage, and they are much easier to scale. Examples of network-based, software-defined storage that provides block volumes include Ceph, iSCSI and NVMe over TCP. NVMe over TCP is one of the emerging areas where we see growth. Because it provides high performance at a substantially lower cost than other similarly performant storage technologies while using existing Ethernet infrastructure, it is emerging as a viable alternative to traditional SAN-based storage for workloads that require block devices.

OpenShift Virtualization can utilize storage presented as block devices or as a formatted file system for storage attached as disks to VMs. These disks can be for the OS/boot volume or data volumes, and choosing between the two is primarily determined by the use case.

Incorporating storage into an OpenShift Virtualization environment

To demonstrate how to incorporate storage into an OpenShift Virtualization environment, I will use Red Hat partner Lightbits Labs. Their approach to software-defined storage using NVMe over TCP is interesting, especially for persistent, sustained, high-performance workloads that traditionally needed a SAN-based fabric to run.

Organizations must install the appropriate CSI driver to effectively integrate storage with OpenShift Virtualization. In the case of Lightbits Labs, once the storage is connected and network access is configured, the next step is installing its CSI driver. This can be done manually or, more efficiently, through the OperatorHub—a built-in marketplace in OpenShift that simplifies the deployment of certified Operators.

Operators in OpenShift Virtualization automate the installation, configuration and lifecycle management of software components, reducing manual intervention and ensuring uninterrupted integration. The Lightbits Labs CSI driver is available as an Operator, allowing administrators to deploy it with a few clicks while ensuring compatibility and lifecycle management through OpenShift. This approach enhances operational efficiency and accelerates storage provisioning in OpenShift Virtualization environments.

Once the CSI driver is installed, the CSI controller and CSI node are visible and running. The next step is to configure a secret and the storage class. The secret holds the authorization credentials needed to access protected storage. A StorageClass in OpenShift is like a datastore policy that defines how storage is provisioned, including the location of storage, type of storage, performance characteristics and behavior (e.g., thin or thick provisioning). It acts as a blueprint for dynamically creating and managing storage volumes, similar to how storage profiles abstract logical unit number (LUN) or datastore configurations in traditional virtualization environments. An OpenShift Virtualization environment can have many storage classes, but one way to improve efficiency is to designate a default storage class that will be used as the backing for the operating system (OS) disks.
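As a rough illustration of the storage class step, the sketch below prints a StorageClass manifest that references a secret and is marked as the cluster default. The annotation and the `csi.storage.k8s.io/provisioner-secret-*` parameters are standard Kubernetes conventions; the class name, secret name and namespace are placeholders. The provisioner value `csi.lightbitslabs.com` is an assumption that should be checked against the output of `oc get csidriver`, and any vendor-specific parameters are deliberately left out.

```shell
# Print a StorageClass manifest that points at a CSI provisioner and its secret.
# Arguments: class name, provisioner name, secret name, secret namespace.
render_storage_class() {
  local sc_name="$1"
  local provisioner="$2"
  local secret_name="$3"
  local secret_ns="$4"
  cat <<EOF
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ${sc_name}
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: ${provisioner}
reclaimPolicy: Delete
volumeBindingMode: Immediate
parameters:
  csi.storage.k8s.io/provisioner-secret-name: ${secret_name}
  csi.storage.k8s.io/provisioner-secret-namespace: ${secret_ns}
  # Vendor-specific parameters go here; see the storage vendor's documentation.
EOF
}

# Hypothetical names; the provisioner value is an assumption to verify on the cluster.
render_storage_class example-nvme-tcp csi.lightbitslabs.com example-secret default
```

Marking one class as the default means PVCs that omit `storageClassName` are provisioned from it, which is what makes it a convenient backing for OS disks.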

OpenShift Virtualization provides a flexible approach to VM creation, whether you use a traditional ClickOps approach or a modern GitOps pipeline to manage VM lifecycles. OpenShift and Kubernetes allow VMs to live side by side with containers, which brings advantages such as streamlined infrastructure and lower operations costs. While there are a few differences between managing the lifecycle of containers and that of VMs, the important one for this conversation is live migration. VMs are expected to maintain state, throughput and connectivity when moving between hosts. In contrast, containers are generally instantiated on a new host, and connectivity is cut over once they are up and running. NVMe over TCP allows VMs to sustain high IO throughput not only during regular operations but also during live migration, which is one of the strengths of Lightbits Labs.

Benefits of Lightbits Labs with Red Hat OpenShift Virtualization

One of the biggest challenges in virtualization is maintaining high-performance storage while enabling live migration. Traditionally, Fibre Channel-based SANs have provided the necessary reliability and throughput for live migration, but modern software-defined storage solutions are changing how organizations design their storage infrastructure.

One of the benefits of Lightbits Labs' architecture is that it uses NVMe/TCP as a high-performance storage transport over standard Ethernet, eliminating the need for proprietary SAN fabrics while still delivering the low latency and high IOPS required for critical workloads. Lightbits Labs was founded by the inventors of NVMe/TCP, and its architecture is designed to maximize throughput with dedicated IO queues that allow multiple streams of IO to be handled efficiently.

Each Lightbits Labs storage target (server in the Lightbits cluster) can deliver over 4 million IOPS, meaning a minimal cluster of just 3 nodes can exceed 12 million IOPS. This high-performance capability ensures that live migration of VMs in OpenShift Virtualization is effective, even for data-intensive workloads. Unlike traditional SANs, which can introduce bottlenecks during live migration, Lightbits Labs’ NVMe/TCP solution enables sustained high throughput and low latency during migration events, helping to maintain application performance and data integrity.

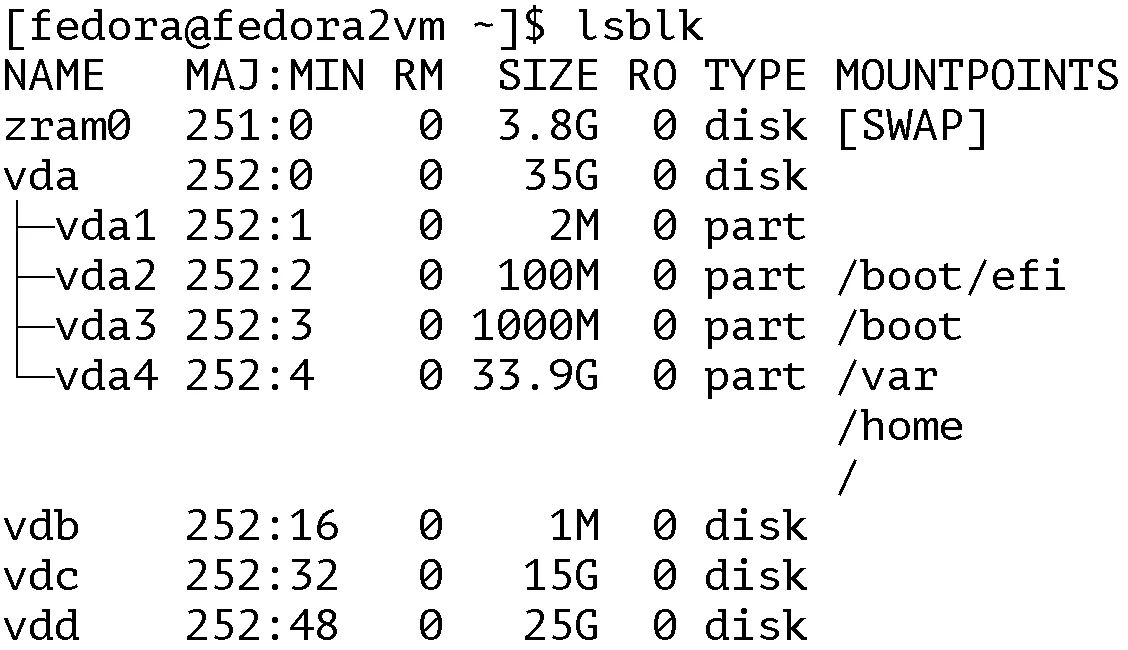

In a recent demonstration, the Lightbits Labs team showed how VMs backed by Lightbits Labs storage were able to sustain consistent IOs while being migrated. They used a VM backed by 3 persistent volume claims (PVCs): one for the operating system (vda) and the other 2 (vdc and vdd) for the application.

Disk layout of the VMs:

In the demo, a Flexible I/O Tester (FIO) script running on the VM generates IOs (70% read, 30% write) against the 2 data disks (vdc and vdd). FIO is a disk benchmarking and performance testing tool for Linux.
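The demo's FIO script itself is not reproduced in this post. As a minimal sketch of what such a workload could look like, the function below builds an fio command line for a random 70% read / 30% write mix over a fixed runtime, with one job per device. The 4k block size, queue depth of 16 and `libaio` engine are assumptions, not values from the demo. The function only prints the command; running it against raw devices overwrites their contents.

```shell
# Build (but do not run) an fio command line: random IO, 70% reads / 30% writes.
# Arguments: runtime in seconds, followed by one or more block devices.
# Block size, queue depth and IO engine are assumed values for illustration.
build_fio_cmd() {
  local runtime="$1"
  shift
  local cmd="fio --name=global --ioengine=libaio --direct=1"
  cmd="$cmd --rw=randrw --rwmixread=70 --bs=4k --iodepth=16"
  cmd="$cmd --time_based --runtime=${runtime}"
  local dev
  for dev in "$@"; do
    # One fio job per device, named after the device (vdc, vdd, ...).
    cmd="$cmd --name=$(basename "$dev") --filename=${dev}"
  done
  printf '%s\n' "$cmd"
}

# Print the command for a 120-second run against the two data disks.
build_fio_cmd 120 /dev/vdc /dev/vdd
```

Keeping one job per device means fio reports IOPS and latency separately for each disk, which matches how the results are compared later in this post.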

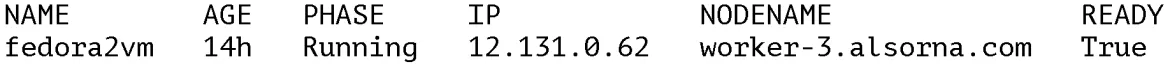

The VM running the script resides on the worker-3.alsorna.com node, shown below:

There are multiple ways to enable a live migration within OpenShift Virtualization. They are:

- Web console utilizing a ClickOps approach

- Command line using `virtctl migrate <vm-name>`

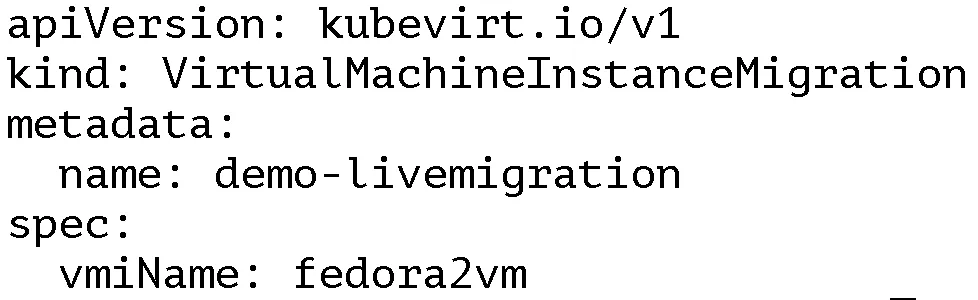

- YAML, creating a VirtualMachineInstanceMigration object that will initiate the live migration

Below is the VirtualMachineInstanceMigration YAML to be used to initiate the migration for this demo:
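A minimal sketch of such an object follows, written as a small helper that prints the manifest. Only the object name `demo-livemigration` comes from the command used later in this post; the VM name `fio-vm` and the namespace `demo` are placeholders.

```shell
# Print a VirtualMachineInstanceMigration manifest.
# Arguments: migration object name, name of the running VM instance, namespace.
render_migration() {
  local migration_name="$1"
  local vmi_name="$2"
  local namespace="$3"
  cat <<EOF
apiVersion: kubevirt.io/v1
kind: VirtualMachineInstanceMigration
metadata:
  name: ${migration_name}
  namespace: ${namespace}
spec:
  vmiName: ${vmi_name}
EOF
}

# The VM name and namespace below are hypothetical.
render_migration demo-livemigration fio-vm demo
```

Piping the output to `oc create -f -` would start the live migration of the named VM instance.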

Once the live migration completes, the VM’s new host is verified to confirm that it’s on the new worker node in the cluster. The output shows that it’s now on worker-2.alsorna.com.

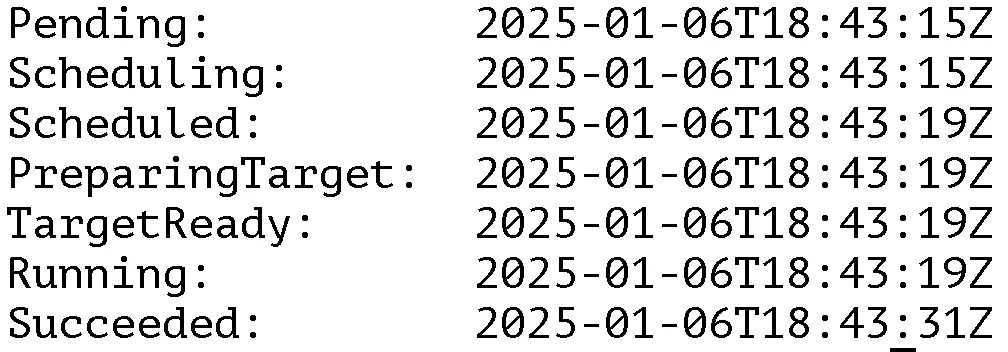

The demo then goes on to confirm how long it took the VM to live migrate:

    oc get VirtualMachineInstanceMigration demo-livemigration -o json | jq -rc '.status.phaseTransitionTimestamps[] | [.phase, .phaseTransitionTimestamp]' | tr -d '[]"' | awk -F, '{print $1": "$2}' | column -t

The output shows that it took around 4 seconds to migrate the VM from the “Scheduling” state to “Running” state and 16 seconds to the “Succeeded” state.
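The durations are simply the differences between the phase transition timestamps. A small helper like the one below can do that subtraction; it assumes GNU `date` (for the `-d` option) and ISO 8601 timestamps, and the timestamps in the example call are illustrative, not taken from the demo.

```shell
# Print the number of seconds between two ISO 8601 timestamps.
# Assumes GNU date, which accepts the timestamp via -d.
elapsed_seconds() {
  local start_epoch
  local end_epoch
  start_epoch="$(date -u -d "$1" +%s)"
  end_epoch="$(date -u -d "$2" +%s)"
  echo $((end_epoch - start_epoch))
}

# Illustrative timestamps only.
elapsed_seconds 2025-01-01T00:00:00Z 2025-01-01T00:00:16Z
```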

Let’s now look at the FIO numbers and compare the same 120-second run of random IOs (70% read / 30% write), with and without a live migration:

| Disk and run | Read IOPS | Read latency (µs) | Write IOPS | Write latency (µs) |
|---|---|---|---|---|
| vdc, no migration | 4946.28 | 575.14 | 2121.61 | 509.79 |
| vdd, no migration | 5146.51 | 551.23 | 2203.45 | 491.39 |
| vdc, during migration | 4902.99 | 584.93 | 2104.91 | 515.28 |
| vdd, during migration | 5097.07 | 562.25 | 2189.2 | 499.34 |

The results of the FIO script show, as expected, a tiny decrease in IOPS (under 1%) and a tiny bump in latency (roughly 1% to 2%) between a regular run of FIO, where the VM is not migrating, and a run that continuously sends IOs to the Lightbits storage during the live migration.
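To quantify the difference between two runs, the relative change can be computed as (new - baseline) / baseline × 100. The helper below is a small sketch of that arithmetic using `awk`; the example calls use the vdc read figures from the table above.

```shell
# Print the percentage change from a baseline value to a new value,
# signed and rounded to two decimal places.
pct_change() {
  awk -v base="$1" -v new="$2" \
    'BEGIN { printf "%+.2f\n", (new - base) / base * 100 }'
}

# vdc read IOPS: no migration vs. during migration.
pct_change 4946.28 4902.99
# vdc read latency (us): no migration vs. during migration.
pct_change 575.14 584.93
```

A negative result means the metric dropped during migration, and a positive one means it rose.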

Next steps

While this demo is fairly simple in execution, the same approach scales to testing more complex and data-intensive workloads. As network bandwidth grows and Ethernet technology continues to advance, organizations will move away from the constraints of traditional Fibre Channel SAN toward more flexible and scalable network-based storage, with NVMe/TCP a clear frontrunner. In this example, the Lightbits Labs team showed how their storage minimizes the impact of live migration and provides continuous, stable access to the back-end storage, allowing VMs to run uninterrupted on Red Hat OpenShift Virtualization. Check out Lightbits Labs in the Ecosystem Catalog for your OpenShift Virtualization cluster today.
